The Emergence of AI-Generated Disinformation

The increasing sophistication of artificial intelligence has opened a new phase in online disinformation, exemplified by Russia's recent campaigns. The ability to fabricate narratives through realistic video is alarming not only in the immediate political context but also for its broader implications for digital trust and governance.

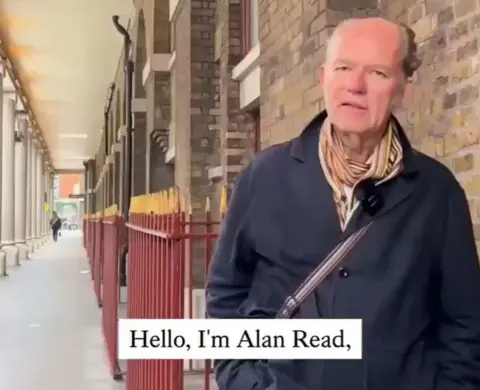

A Case Study: The Manipulated Persona of Dr. Alan Read

One particularly disturbing instance involves Alan Read, a King's College professor whose likeness was exploited in a politicized AI-generated video. The video features a synthetic voice mimicking his own and delivering rhetoric that does not reflect his actual views. The manipulation shows how easily misinformation can masquerade as credible content, especially for viewers not trained to spot it.

“What we've seen is a shift in how influence is produced,” commented Chris Kremidas-Courtney, a defense analyst at the European Policy Centre. “We face systems that can generate persuasion at scale, for pennies.”

The Broader Threat Landscape

AI-generated disinformation campaigns have surged at the intersection of technology and geopolitics. The new ability to produce high-quality videos has emboldened various actors to launch sophisticated information operations. The trend has emerged amid heightened geopolitical tensions and ongoing conflicts, and it lets adversaries attempt to subvert democratic processes at unprecedented scale.

Current Countermeasures and Their Limitations

As AI technologies evolve, so too must the strategies used to counter their misuse. Current frameworks governing online content are ill-equipped to handle this new wave of fabricated media, and laws on foreign influence and misinformation often lag behind technological advances. Britain's Online Safety Act, for instance, provides minimal recourse against disinformation: it obliges platforms to act only upon proof of foreign interference, which is often complex and slow to establish.

“The posts are hard to trace to their origins, yet many exhibit similar stylistic cues,” researchers say, affirming widespread concerns regarding the Kremlin's disinformation operations.

A Closer Look at Operations Like Matryoshka

Some campaigns, such as Operation Matryoshka, illustrate tactics common in disinformation. The method buries the original false narrative within layers of reposts from seemingly unrelated accounts, complicating efforts to address falsehoods. Unlike conventional outlets such as RT and Sputnik, these operations afford a degree of plausible deniability, making counter-influence efforts particularly challenging.

The Urgency of Establishing Robust Safeguards

Experts continue to stress the urgent need for governmental and institutional frameworks to adapt to this new reality. In late 2025, European officials began discussing countermeasures to mitigate the risks posed by AI-driven misinformation, acknowledging that existing systems are insufficient to safeguard democratic debate.

Disinformation's Global Ripple Effect

Beyond individual cases, the ramifications of AI-enhanced disinformation extend globally. In Poland, for instance, a series of AI-generated videos showing young women advocating for “Polexit” (Poland leaving the EU) recently provoked political unrest and led to widespread calls for an investigation into the origins of the content.

“There is no doubt that this is Russian disinformation,” said Polish government spokesman Adam Szlapka.

The Path Forward: Enhancing Awareness and Creating Solutions

Public awareness and education about AI-generated content will be paramount. As the line between real and fabricated content becomes increasingly blurred, a concerted effort towards transparency in digital media practices will be essential. Governments, tech firms, and civil society actors must work together to develop both technological solutions for content verification and legal frameworks that keep pace with the speed at which misinformation spreads.

Conclusion: A Call to Action

The rise of AI-generated disinformation presents an existential challenge to information integrity and democratic governance. As we navigate this new reality shaped by technological advances, proactive engagement on multiple fronts can help mitigate the influence of such campaigns and secure the truth in our digital narratives.

Key Facts

- AI in Disinformation: Russia is increasingly using AI-generated videos for online disinformation.

- Dr. Alan Read Incident: AI-generated content manipulated the likeness and voice of King's College professor Alan Read.

- Operation Matryoshka: The campaign uses layers of reposts to mask the origins of disinformation.

- Geopolitical Impact: AI-generated disinformation has affected political dynamics, including unrest in Poland.

- Regulatory Limitations: Current laws like Britain's Online Safety Act are not adequate against AI-driven disinformation.

Background

Russia's deployment of AI-generated videos has opened a new phase in online disinformation, complicating governance and trust in digital media. The rapidly proliferating capabilities of AI raise concerns over the security and integrity of democratic processes globally.

Quick Answers

- What is the role of AI in Russia's disinformation? Russia is increasingly using AI-generated videos to create online disinformation campaigns.

- Who is Dr. Alan Read? Dr. Alan Read is a King's College professor whose likeness was manipulated in an AI-generated video.

- What is Operation Matryoshka? Operation Matryoshka involves using layers of reposts to obscure the origins of disinformation.

- How has AI disinformation affected Poland? AI-generated videos stirred political unrest in Poland, leading to calls for an investigation.

- What are the limitations of current disinformation laws? Current regulations like Britain's Online Safety Act are not effectively equipped to handle AI-generated disinformation.

Frequently Asked Questions

What incident involved Dr. Alan Read and AI?

Dr. Alan Read's likeness was manipulated in an AI-generated video that misrepresented his views.

What are the risks associated with AI-generated videos?

AI-generated videos can pass as credible content and make the origins of disinformation harder to trace.

How has the EU responded to AI-driven misinformation?

European officials began discussing countermeasures in late 2025 to address risks posed by AI misinformation.

Source reference: https://www.bbc.com/news/articles/cx2r7grrdwzo
